Apache Iceberg

Apache Iceberg is an open table format for large-scale analytics data. Dremio uses Iceberg as its native table format, supporting full DML operations, schema evolution, time travel, and table optimization on v2 and v3 tables.

Dremio works with Iceberg tables through supported catalogs, including AWS Glue Data Catalog, Iceberg REST Catalog, Snowflake Open Catalog, and Unity Catalog. See Add Your Data for catalog configuration details.

Iceberg v3 Preview

Although v2 remains the default format version in Dremio, Iceberg v3 introduces performance optimizations and new data types for Iceberg tables. Key v3 features include:

- Deletion vectors — compact bitmaps that replace v2 position delete files, avoiding costly rewrites and significantly improving merge-on-read performance.

- Variant type — flexible storage for semi-structured JSON data without a fixed schema, making it simpler to query complex nested formats.

- Row lineage — automatic row-level change tracking that strengthens auditability and simplifies debugging and compliance workflows.

- Default column values — automatic values for new columns not specified during inserts, simplifying schema evolution without breaking existing pipelines.

There are two ways to create a v3 table:

-

Explicit: Set the

Example: Create a v3 table explicitlyformat-versiontable property to3.Example: Upgrading a v2 table to v3CREATE TABLE my_catalog.db.events (

event_id BIGINT,

event_time TIMESTAMP,

payload VARCHAR

) TBLPROPERTIES ('format-version' = '3');ALTER TABLE my_table SET TBLPROPERTIES ('format-version' = '3'); -

Implicit: Use a v3 feature such as a VARIANT column in your table definition. When Dremio detects a v3 feature, it automatically creates the table as v3.

Example: Create a v3 table implicitly via VARIANTExample: Adding a v3 feature upgrades the table to v3CREATE TABLE my_catalog.db.events (

event_id BIGINT,

event_time TIMESTAMP,

payload VARIANT

);ALTER TABLE my_table ADD COLUMNS (payload VARIANT);

Once a table is upgraded to v3, it cannot be downgraded to v2. The upgrade is a metadata-only operation—if it fails, your table remains v2. However, once the upgrade succeeds, v3 features such as row lineage are permanently enabled and the table cannot be reverted.

Deletion Vectors

Iceberg v3 changes how Dremio tracks row-level deletes for operations configured to use merge-on-read mode, delivering significant query performance improvements.

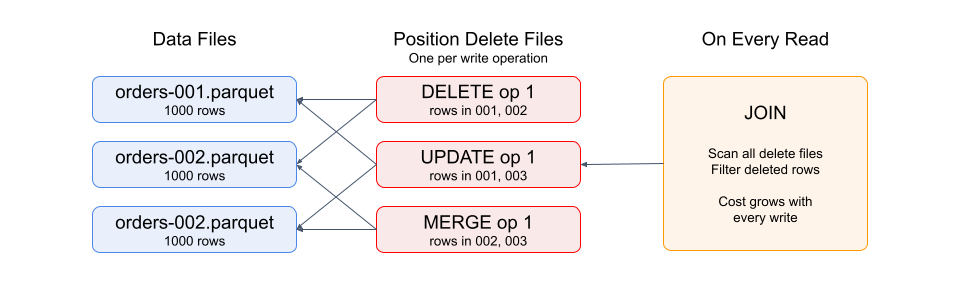

In v2, merge-on-read operations store deleted row positions in separate Parquet files. During reads, Dremio must scan these delete files and join them with data files to filter out deleted rows—an operation that becomes increasingly expensive as delete files accumulate.

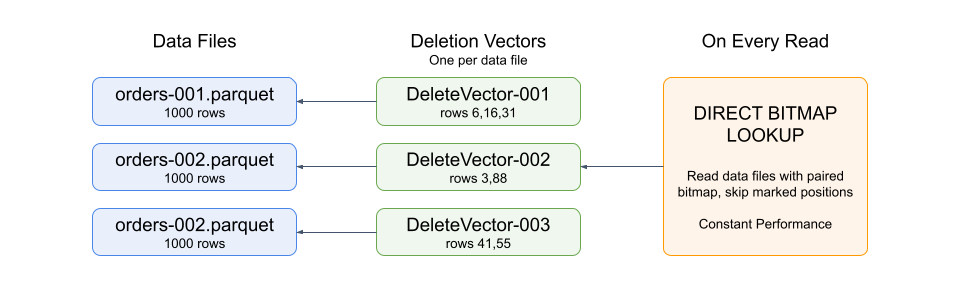

v3 replaces position delete files with deletion vectors: compact bitmaps stored in Puffin files (an Iceberg metadata file format) with a direct 1:1 mapping between each deletion vector and its associated data file. This eliminates the join overhead during reads and reduces both query latency and memory pressure.

Configure Deletion Vectors

Deletion vectors are used when a DML operation is configured for merge-on-read mode on a v3 table. The write.delete.mode, write.update.mode, and write.merge.mode table properties control DELETE, UPDATE, and MERGE statements respectively. Each defaults to copy-on-write.

ALTER TABLE my_catalog.db.events SET TBLPROPERTIES (

'write.delete.mode' = 'merge-on-read',

'write.update.mode' = 'merge-on-read',

'write.merge.mode' = 'merge-on-read'

);

The two modes can coexist on the same table. When a copy-on-write operation rewrites a data file, it applies any existing deletion vectors for that file, producing a clean data file without deleted rows.

Choose Between Copy-on-Write and Merge-on-Read

The choice depends on your workload:

-

Copy-on-write rewrites entire data files during DML operations. Writes are slower but reads are fast because data files contain only current rows.

-

Merge-on-read records deletions separately without rewriting data files. Writes are faster but reads must apply deletion vectors to filter out deleted rows.

With v3 deletion vectors, the read penalty for merge-on-read is significantly reduced compared to v2. This makes merge-on-read a more attractive option for workloads with frequent deletes or updates.

You can configure each operation type independently based on how frequently you use each operation and your latency requirements for writes versus reads.

Apply Deletion Vectors

Deletion vectors are soft-deletes—they mark rows as removed without rewriting data files. As deletion vectors accumulate over time, read overhead increases because Dremio must apply them during query execution. Regular file rewrites keep this overhead in check by producing clean data files that incorporate the deletions.

Deletion vectors are applied when data files are rewritten. This happens during:

- Compaction — Automatic Optimization, when enabled, applies deletion vectors as part of its ongoing maintenance cycles.

OPTIMIZE TABLEtriggers compaction manually for a one-time cleanup. - Copy-on-write DML — When a

DELETE,UPDATE, orMERGEoperation runs in copy-on-write mode, it applies any existing deletion vectors for the files it rewrites, producing clean data files.

After data files are rewritten, the original files and their associated Puffin files (which contain the deletion vectors) remain on storage until they are removed. Use VACUUM TABLE to expire old snapshots and delete these unreferenced files.

Upgrade from v2 to v3

When you upgrade a v2 table to v3, existing position delete files remain readable. The first merge-on-read operation (DELETE, UPDATE, or MERGE) after the upgrade converts all existing v2 position deletes into deletion vectors.

Variant Type

The VARIANT data type stores semi-structured data such as JSON, allowing you to query nested and dynamic structures without defining a fixed schema. Using a VARIANT column in a table definition automatically creates the table as v3, or upgrades an existing table to v3 when added with ALTER TABLE.

For syntax and functions, see VARIANT.

Row Lineage

Iceberg v3 tables automatically track the lineage of individual rows using two metadata columns. Row lineage is required on all v3 tables and cannot be disabled.

_row_id– A unique identifier assigned to each row when it is first inserted. Updated rows retain their original_row_id._last_updated_sequence_number– The snapshot sequence number of the operation that last wrote the row. This value is set on insert and updated when the row is modified byUPDATEorMERGE.

Dremio writes row lineage metadata automatically through all DML operations and table maintenance. OPTIMIZE preserves both row lineage values when rewriting data files. Because row lineage is part of the open Iceberg specification, this metadata is stored in the table's Parquet files and can be queried by engines that support reading row lineage columns, such as Apache Spark 4.1 or later.

When you upgrade a v2 table to v3, existing rows have a null _row_id. Rows inserted after the upgrade are assigned _row_id values automatically.

Default Column Values

Iceberg v3 introduces support for default values on table columns. Default values simplify schema design and data insertion by automatically providing values when a column value is not specified.

When you add a column with a default value to an existing table, existing rows return the default value for the new column, and new rows use the default value when the column value is not specified.

Adding a column with a default valueALTER TABLE orders ADD COLUMNS (status VARCHAR DEFAULT 'pending');

You can change the default value for new writes using ALTER TABLE ... MODIFY COLUMN. When you change a default, it applies only to new writes that do not specify the column value. Existing rows continue to use the default from the original column definition.

For syntax details, see CREATE TABLE and ALTER TABLE.

COPY INTO will continue to write NULLs if a value is not found for a column.

Supported Data Types

Default values are supported for primitive-type columns only; complex-type columns (arrays, maps, structs) are not supported. These are the supported primitive types:

- BIGINT

- BOOLEAN

- DATE

- DECIMAL

- DOUBLE

- FLOAT

- INT

- TIME

- TIMESTAMP

- UUID

- VARBINARY

- These must be set using the following syntax

x'yourhexvalue'

- These must be set using the following syntax

- VARCHAR

Schema

Define advanced schema features on Iceberg tables using Dremio SQL commands. For information on how Dremio uses these definitions during automatic table maintenance, see Automatic Optimization.

Clustering

Clustering sorts records within data files based on one or more columns using Z-ordering. This allows the query engine to use Parquet column statistics to skip entire files that don't match query filters, reducing the amount of data scanned.

Clustering vs. Partitioning

Partitioning physically splits data into separate directories by partition value. While effective for low-cardinality columns like region or date, partitioning has well-known drawbacks: partitioning on high-cardinality columns produces thousands of small files, low-cardinality columns can create skewed partitions where some are much larger than others, and multi-column partitioning compounds both problems. Choosing the wrong partition column is expensive to fix because it requires rewriting the entire table.

Clustering avoids these issues. Because it sorts records within files rather than splitting them across directories, file sizes remain uniform regardless of column cardinality. You can cluster on multiple columns without combinatorial explosion, and changing clustering keys only requires re-optimizing the table—not rewriting the partition layout.

Dremio recommends clustering for most workloads. Partitioning is still useful when queries consistently filter on a single low-cardinality column such as a date, or when downstream systems depend on a directory-based layout.

Choose Clustering Keys

Choose columns that are commonly used in query filters or that serve as primary keys. Order them by filtering frequency and cardinality, with the most commonly queried high-cardinality columns first.

Clustering keys must be columns in the table. Transformations are not supported. See the CREATE TABLE and ALTER TABLE pages for the CLUSTER BY SQL syntax.

Data Types for Clustered Columns

Dremio Iceberg clustering supports clustered columns of the following data types:

- DECIMAL

- INT

- BIGINT

- FLOAT

- DOUBLE

- VARCHAR

- VARBINARY

- DATE

- TIME

- TIMESTAMP

CTAS Behavior and Clustering

When running a CREATE TABLE AS statement with clustering, the data is written in an unordered way. For the best performance, run an OPTIMIZE TABLE command after creating the table.

For details on how Dremio maintains clustering during ongoing table maintenance, see Automatic Optimization.

Partition Evolution

Partition evolution allows you to modify partition layouts in a table as data volume or query patterns change, without needing to rewrite the entire table.

You can define partition specifications when creating a table with CREATE TABLE and modify them with ALTER TABLE. For information on how Dremio rewrites files to align with partition specifications during optimization, see Automatic Optimization.

Time Travel and Rollbacks

Iceberg tables maintain a history of snapshots, enabling you to query previous versions of your data or roll back to an earlier state.

Time Travel

Use the AT SNAPSHOT or AT TIMESTAMP syntax in your SELECT queries to query historical versions of a table:

SELECT * FROM my_table AT TIMESTAMP '2025-01-15 10:00:00';

SELECT * FROM my_table AT TIMESTAMP '2025-01-15 10:00:00';

Time travel depends on snapshot retention. When snapshots are removed—either by VACUUM TABLE or by automatic expiration controlled by the history.expire.max-snapshot-age-ms and history.expire.min-snapshots-to-keep table properties—the corresponding table versions are no longer available for time travel queries.

Rollbacks

Use the ROLLBACK TABLE command to restore a table to a previous state. Each DML or DDL operation creates a new snapshot, and rollbacks redirect the table's metadata pointer to an earlier one.

ROLLBACK TABLE my_catalog.db.events TO SNAPSHOT '4758923048671023905';

ROLLBACK TABLE my_catalog.db.events TO TIMESTAMP '2025-01-15 10:00:00';

Rollbacks create a new snapshot identical to the target. All intermediate snapshots are preserved and remain available for time travel queries until they expire or are removed by VACUUM TABLE.

If DML operations are running when a rollback executes, those operations can fail because the snapshot ID has changed. SELECT queries in progress will complete normally.

Table Management

Dremio provides Automatic Optimization, which automatically maintains Iceberg tables in the Open Catalog using a dedicated engine. This includes data file compaction, partition evolution, manifest file rewriting, and row-level delete handling.

For manual table maintenance:

- Use

OPTIMIZE TABLEto manually compact files or reorder data for clustered tables. - Use

VACUUM TABLEto expire old snapshots and then remove the unreferenced files.VACUUM TABLEfrees storage, but it also controls your time travel window—once a snapshot is expired, that table version is no longer queryable.

Incremental OPTIMIZE

OPTIMIZE TABLE processes tables incrementally by default. Rather than attempting to rewrite an entire table in a single operation, OPTIMIZE works in resource-aware batches, ensuring it completes successfully even on very large or heavily skewed tables.

Each OPTIMIZE run is limited by two factors:

| Limiting Factor | Description |

|---|---|

| Bytes rewritten | Scales with the target engine size; represents total work per run |

| Input file count | Capped based on executor heap memory to prevent OOM errors |

Each DataFile object requires approximately 50 KB of memory (for a table with ~100 columns). The default file count limit is approximately 200,000 files, corresponding to a ~10–12 GB memory footprint.

Behavior based on table size:

- Small-to-medium tables / already-optimized tables —

OPTIMIZEprocesses the entire table in a single run. - Large, unoptimized tables — Multiple

OPTIMIZEruns are required. Each run commits progress before stopping. Re-runOPTIMIZE TABLE <table_name>until the output confirms the table is fully optimized.

Check the Optimization State

You can inspect the current optimization state of a table using Iceberg metadata tables:

Check optimization state-- Check file size distribution

SELECT file_size_in_bytes, count(*) AS file_count

FROM TABLE(table_files('<table_name>'))

GROUP BY 1

ORDER BY 1;

-- Check snapshot history

SELECT committed_at, operation, summary

FROM TABLE(table_snapshot('<table_name>'))

ORDER BY committed_at DESC;

Table Properties

The following Apache Iceberg table properties are supported in Dremio. You can use these properties to configure aspects of Apache Iceberg tables:

| Property | Description | Default |

|---|---|---|

| commit.manifest.target-size-bytes | The target size when merging manifest files. | 8 MB |

| commit.status-check.num-retries | The number of times to check whether a commit succeeded after a connection is lost before failing due to an unknown commit state. | 3 |

| compatibility.snapshot-id-inheritance.enabled | Enables committing snapshots without explicit snapshot IDs. | false (always true if the format version is > 1) |

| format-version | The table's format version defined in the Spec. Options: 1, 2, or 3 | 2 |

| history.expire.max-snapshot-age-ms | The maximum age (in milliseconds) of snapshots to keep as expiring snapshots. | 432000000 (5 days) |

| history.expire.min-snapshots-to-keep | The default minimum number of snapshots to keep as expiring snapshots. | 1 |

| iceberg.enableVariantShredding | Enables shredding of VARIANT data during Parquet writes. Shredding improves read performance by storing VARIANT fields in typed columns, but increases write overhead. Set to false to disable shredding for write-heavy workloads. | true |

| write.delete.mode | The table's method for handling row-level deletes. copy-on-write rewrites data files. merge-on-read records deletions separately—using position delete files on v2 tables or deletion vectors on v3 tables. Options: copy-on-write or merge-on-read | copy-on-write |

| write.merge.mode | The table's method for handling row-level merges. copy-on-write rewrites data files. merge-on-read records deletions separately—using position delete files on v2 tables or deletion vectors on v3 tables. Options: copy-on-write or merge-on-read | copy-on-write |

| write.metadata.compression-codec | The Metadata compression codec. Options: none or gzip | none |

| write.metadata.delete-after-commit.enabled | Controls whether to delete the oldest tracked version metadata files after commit. | false |

| write.metadata.metrics.column.col1 | Metrics mode for column col1 to allow per-column tuning. Options: none, counts, truncate(length), or full | (not set) |

| write.metadata.metrics.default | Default metrics mode for all columns in the table. Options: none, counts, truncate(length), or full | truncate(16) |

| write.metadata.metrics.max-inferred-column-defaults | Defines the maximum number of top-level columns for which metrics are collected. The number of stored metrics can be higher than this limit for a table with nested fields. | 100 |

| write.metadata.previous-versions-max | The maximum number of previous version metadata files to keep before deleting after commit. | 100 |

| write.parquet.compression-codec | The Parquet compression codec. Options: zstd, gzip, snappy, or uncompressed | zstd |

| write.parquet.compression-level | The Parquet compression level. Supported for gzip and zstd. | null |

| write.parquet.dict-size-bytes | The Parquet dictionary page size (in bytes). | 2097152 (2 MB) |

| write.parquet.page-row-limit | The Parquet page row limit. | 20000 |

| write.parquet.page-size-bytes | The Parquet page size (in bytes). | 1048576 (1 MB) |

| write.parquet.row-group-size-bytes | Parquet row group size. Dremio uses this property as a target file size since it writes one row-group per Parquet file. Ignores the store.parquet.block-size and dremio.iceberg.optimize.target_file_size_mb support keys. | 134217728 (128 MB) |

| write.summary.partition-limit | Includes partition-level summary stats in snapshot summaries if the changed partition count is less than this limit. | 0 |

| write.update.mode | The table's method for handling row-level updates. copy-on-write rewrites data files. merge-on-read records deletions separately—using position delete files on v2 tables or deletion vectors on v3 tables. Options: copy-on-write or merge-on-read | copy-on-write |

You can configure these properties when you create or alter Iceberg tables.

For example, to control output file size, set the Iceberg table property:

Set target file sizeALTER TABLE <table_name>

SET TBLPROPERTIES ('write.target-file-size-bytes' = '268435456');

-- 256 MB

Dremio uses the Iceberg default value for table properties that are not set. See Iceberg's documentation for the full list of table properties. To view the properties that are set for a table, use the SQL command SHOW TBLPROPERTIES.

In cases where Dremio has a support key for a feature covered by a table property, Dremio uses the table property instead of the support key.

Related Topics

- Load Data Into Tables — Load data from CSV, JSON, or Parquet files into existing Iceberg tables.

- SQL Commands — See the syntax of the SQL commands that Dremio supports for Iceberg tables.